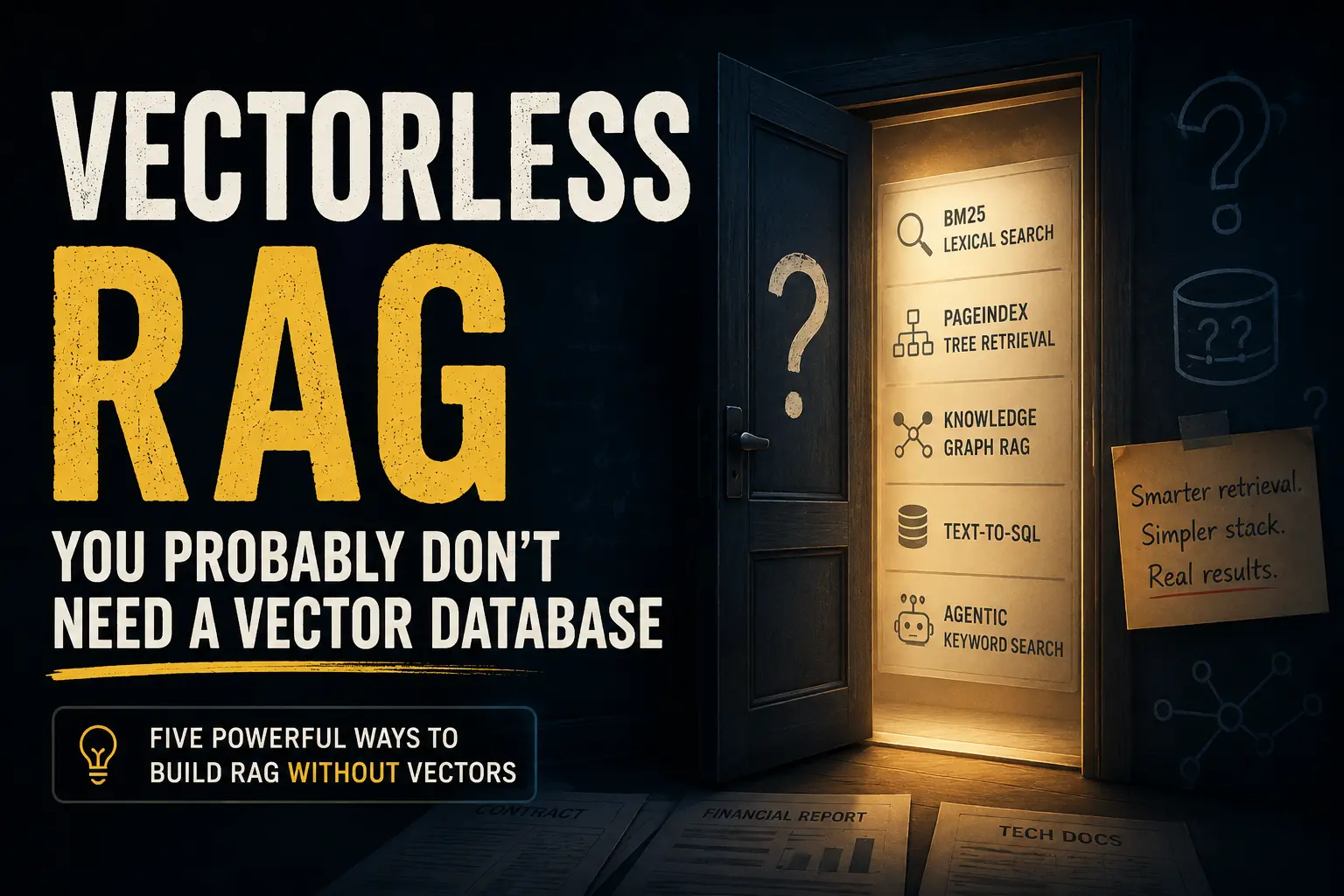

Vectorless RAG: You Probably Don't Need a Vector Database

Posted at 11-May-2026 / Written by Rohit Bhatt

30-sec summary

Everyone building AI features in 2026 seems to follow the same recipe: chunk your documents, push them through an embedding model, store vectors in Pinecone or Weaviate, and call it RAG. But the vector database is often the most expensive, fragile part of the stack — and you don't actually need it. Vectorless RAG is a family of retrieval approaches (BM25, PageIndex, knowledge graphs, text-to-SQL, agentic keyword search) that ground LLM answers in real documents without dense embeddings or approximate nearest-neighbour search. This deep dive covers when to skip the vector stack, the five core vectorless approaches with production Rails code, and the honest trade-offs based on the latest 2025–2026 research.

Introduction

Everyone building AI features in 2025–2026 seems to follow the same recipe: chunk your documents, push them through an embedding model, store vectors in Pinecone or Weaviate, and call it RAG. It works. But here's the uncomfortable question nobody in the tutorial pipeline is asking:

What if the vector database is the most expensive, fragile part of your stack — and you don't actually need it?

That's the premise behind Vectorless RAG: a family of retrieval approaches that ground LLM answers in real documents without dense embeddings or approximate nearest-neighbour search. Not because vectors are bad, but because the blanket assumption that you need them is often wrong.

In this post, we'll unpack what vectorless RAG actually means, why the problem exists, and what the five core approaches look like in practice — with real code for Rails developers and enough depth for AI engineers who've been doing this for years.

First, What Is RAG? (Quick Primer for Newcomers)

If you're already comfortable with RAG, skip ahead to the next section.

RAG stands for Retrieval-Augmented Generation. The idea is simple: LLMs have a knowledge cutoff and can't access your private documents, so instead of fine-tuning an expensive model, you retrieve relevant text at query time and inject it into the prompt.

A basic RAG pipeline looks like this: a user asks a question, a retrieval layer searches your docs for relevant sections, those sections are assembled into context, and an LLM generates an answer grounded in the retrieved text.
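As a rough sketch of that flow, the snippet below wires a toy retrieval layer to a prompt builder. The `retrieve` and `build_prompt` helpers and the two sample passages are illustrative assumptions, and the final LLM call is left out; any retriever that returns an array of passages could be swapped in.

```ruby
# Minimal RAG flow: retrieve -> assemble context -> build the prompt for the LLM.

DOCS = [
  "Refunds are issued within 14 days of a return.",
  "Shipping takes 3 to 5 business days."
].freeze

# Toy retrieval layer (assumption): keep passages that share a word with the question.
def retrieve(question)
  words = question.downcase.scan(/\w+/)
  DOCS.select { |doc| (doc.downcase.scan(/\w+/) & words).any? }
end

# Number the retrieved passages and place them ahead of the question.
def build_prompt(question, passages)
  context = passages.each_with_index.map { |text, i| "[#{i + 1}] #{text}" }.join("\n")
  <<~PROMPT
    Answer the question using only the context below.

    Context:
    #{context}

    Question: #{question}
  PROMPT
end

question = "How long do refunds take?"
puts build_prompt(question, retrieve(question))
```

Everything that follows in this post is about what goes inside `retrieve`.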

The conventional implementation uses vector embeddings for the retrieval layer:

- 1.Embed: Convert every document chunk into a dense numerical vector (embedding).

- 2.Store: Persist those vectors in a specialized database (Pinecone, Weaviate, Qdrant, etc.).

- 3.Encode the query: At query time, convert the question into a vector.

- 4.Search: Find the most semantically similar document vectors via cosine similarity / ANN search.

- 5.Generate: Feed those chunks to the LLM.
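To make step 4 concrete, here is a minimal sketch of cosine similarity with an exact (brute-force) top-k search. The three-dimensional vectors and document IDs are made up for illustration; real embedding models emit hundreds or thousands of dimensions, and vector databases replace the exhaustive scan with an approximate index.

```ruby
# Cosine similarity between two equal-length vectors.
def cosine_similarity(a, b)
  dot = a.zip(b).sum { |x, y| x * y }
  norm = Math.sqrt(a.sum { |x| x * x }) * Math.sqrt(b.sum { |x| x * x })
  norm.zero? ? 0.0 : dot / norm
end

# Exact nearest-neighbour search: score every vector, keep the k best IDs.
def top_k(query_vec, index, k)
  index.max_by(k) { |_id, vec| cosine_similarity(query_vec, vec) }.map(&:first)
end

# Made-up "embeddings" keyed by document ID (assumption for illustration).
INDEX = {
  "refund-policy" => [0.9, 0.1, 0.0],
  "shipping-times" => [0.1, 0.9, 0.0],
  "returns-howto" => [0.7, 0.3, 0.1]
}.freeze

p top_k([1.0, 0.0, 0.0], INDEX, 2)
```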

This works surprisingly well for many use cases. But it comes with real costs — costs that are easy to ignore when you're building a demo but unavoidable in production.

The Problem: Why Vector RAG Falls Apart in the Real World

Before we talk about alternatives, let's be honest about what's actually breaking. These are not theoretical concerns — they are patterns I've encountered repeatedly in production systems.

1. Chunking Destroys Context

Every RAG tutorial starts with: "Split your documents into 512-token chunks." But documents have structure. A 200-page financial report has sections, subsections, tables, and cross-references. When you slice it into fixed-size chunks, you cut table headers from their rows, separate a disclaimer from the claim it qualifies, and fragment multi-step processes across chunks. The LLM receives a context window that looks like a shredded document — and hallucinates the rest.
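A small sketch makes the damage visible. It uses an 8-word chunk size instead of 512 tokens so the effect shows up on a tiny, invented report; `fixed_size_chunks` and `section_chunks` are hypothetical helpers, not part of any library.

```ruby
# Naive fixed-size chunking: split on whitespace, group every `size` words.
def fixed_size_chunks(text, size)
  text.split.each_slice(size).map { |words| words.join(" ") }
end

# Structure-aware alternative: split on blank lines so each section stays whole.
def section_chunks(text)
  text.split(/\n{2,}/).map(&:strip).reject(&:empty?)
end

REPORT = <<~TEXT
  Revenue by region (USD millions)
  Region | Q1 | Q2
  EMEA | 12 | 15
  APAC | 9 | 11
  Figures exclude discontinued operations.

  Outlook
  Management expects flat revenue in Q3.
TEXT

puts "Fixed-size chunks:"
fixed_size_chunks(REPORT, 8).each { |chunk| puts "  #{chunk.inspect}" }

puts "Section chunks:"
section_chunks(REPORT).each { |chunk| puts "  #{chunk.inspect}" }
```

In the fixed-size output, the table header is cut in half and no chunk holds both the header and a data row; the section-based split keeps the table and its footnote together.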

2. "Vibe Retrieval" Misses Exact Answers

Embeddings capture semantic similarity, not factual relevance. A query for "NullPointerException in OrderService" will retrieve chunks about error handling in general — because they're semantically close — rather than the one line in your codebase that actually throws the exception.

The same problem appears in finance, law, and medicine. A contract query about "clauses voiding the warranty" may surface the clause establishing the warranty because the vocabulary overlaps. This is what some researchers call vibe retrieval: the results feel right but aren't.

3. Infrastructure Overhead Is Significant

A production vector RAG stack typically requires an embedding model (often GPU-backed, with API latency or infra cost), a vector database (Pinecone, Weaviate, Qdrant — all require ops overhead), re-indexing pipelines every time documents change, and duplicate infrastructure if you're already running Postgres or Elasticsearch. For a team already running Postgres, adding a vector DB for a single RAG feature is like buying a second kitchen because you need to make coffee.

4. Embeddings Fail in Niche Domains

General-purpose embedding models are trained on general text. In specialised domains — Korean Traditional Medicine, legal Latin, financial regulations, medical SOAP notes — the embedding space doesn't reflect domain semantics. A 2024 paper on Korean medicine RAG (arXiv 2401.11246) showed that in niche corpora, embedding similarity correlated with token overlap rather than domain relevance — making general embeddings actively misleading.

5. Debugging Is Opaque

When a chunk surfaces in your retrieval results, why did it surface? A cosine score of 0.73 is not an audit trail. In regulated industries — finance, healthcare, legal — you need to explain why a specific passage was retrieved. Lexical and structural retrieval systems give you that; vector similarity doesn't.
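As an illustration of what a lexical audit trail can look like, the sketch below reports which query terms matched a passage and how often. The `explain_match` helper and the sample passage are assumptions for demonstration; production engines expose richer explanations, but the principle is the same.

```ruby
# Lowercase and split into alphanumeric tokens.
def tokenize(text)
  text.downcase.scan(/[a-z0-9]+/)
end

# Return a hash of query terms found in the passage, with occurrence counts.
def explain_match(query, passage)
  counts = tokenize(passage).tally
  tokenize(query).uniq.each_with_object({}) do |term, hits|
    hits[term] = counts[term] if counts.key?(term)
  end
end

passage = "The warranty is void if the seal is broken. Warranty claims require a receipt."
p explain_match("warranty void clause", passage)
```

The result names the exact terms responsible for the hit ("warranty" twice, "void" once) and shows that "clause" did not match, which is something you can put in a log or show to an auditor.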

So What Is Vectorless RAG?

Vectorless RAG is any RAG architecture where the retrieval layer does not rely on dense vector embeddings or approximate nearest-neighbour search.

The original RAG paper by Lewis et al. (2020) paired its generator with a dense retriever, but the core idea, augmenting a language model with retrieved external knowledge, does not depend on vectors. The vector assumption hardened into a default later, driven by benchmark results on open-domain QA, a use case that happens to favour semantic similarity.

Vectorless RAG is the reassertion of a simple point: retrieval can be lexical, structural, symbolic, or LLM-driven — and for many real-world corpora, these approaches outperform vectors.

Approach 1: BM25 / Lexical Retrieval

What it is: BM25 is a ranking algorithm from the 1990s. It scores documents based on term frequency, inverse document frequency, and document length normalization. No neural network. No GPU. No embeddings.

The simplified formula is roughly: BM25 score ≈ Σ IDF(term) × TF(term in doc) / (TF + k₁ × (1 - b + b × doc_length/avg_length)), where IDF (Inverse Document Frequency) gives rare terms a higher score, TF (Term Frequency) measures how often the term appears, and k₁ and b are tuning parameters (typically k₁=1.5, b=0.75).
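The simplified formula can be sketched in plain Ruby as follows. This is an illustration under stated assumptions rather than a production scorer: the IDF variant is the non-negative form used by Lucene, the tokenizer is a bare regex, the corpus is invented, and, like the simplified formula, the code omits the constant (k₁ + 1) numerator factor of the textbook form, which rescales scores without changing the ranking.

```ruby
K1 = 1.5
B = 0.75

def tokenize(text)
  text.downcase.scan(/[a-z0-9]+/)
end

# IDF: rarer terms get a higher weight (Lucene-style variant, never negative).
def idf(term, docs_tokens)
  n = docs_tokens.count { |tokens| tokens.include?(term) }
  Math.log((docs_tokens.size - n + 0.5) / (n + 0.5) + 1)
end

# Returns one score per document, in corpus order.
def bm25_scores(query, corpus)
  docs_tokens = corpus.map { |doc| tokenize(doc) }
  avg_len = docs_tokens.sum(&:size).to_f / docs_tokens.size

  docs_tokens.map do |tokens|
    # Longer-than-average documents are penalised through this term.
    length_norm = K1 * (1 - B + B * tokens.size / avg_len)
    tokenize(query).sum(0.0) do |term|
      tf = tokens.count(term)
      idf(term, docs_tokens) * tf / (tf + length_norm)
    end
  end
end

CORPUS = [
  "The warranty is void if the seal is broken.",
  "Shipping takes three to five business days.",
  "Warranty claims require the original receipt."
].freeze

bm25_scores("warranty void", CORPUS).each_with_index do |score, i|
  puts format("%.3f  %s", score, CORPUS[i])
end
```

The first document scores highest because it contains both query terms, and "void" carries more weight than "warranty" because it appears in fewer documents.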

For newcomers: Think of BM25 as a very smart keyword search. If you search for "warranty void clause", it finds documents that contain those exact words, with higher weight for documents where those words are rare and concentrated.

BM25 works best for:

- 1.Exact identifiers: error codes, SKUs, statute numbers, drug names.

- 2.Code searches: function names, class names, exception types.

- 3.Technical documentation: with precise terminology.

- 4.Literal queries: any domain where the user means exactly what they typed.

Here is a Rails implementation using pg_search:

```ruby
# Gemfile
gem "pg_search"

# Migration: precomputed, weighted tsvector column + GIN index for fast search
class AddSearchableToDocuments < ActiveRecord::Migration[8.0]
  def up
    enable_extension "pg_trgm" # required by the trigram option in the model

    add_column :documents, :searchable, :tsvector

    # Populate the searchable column from title + body. The A/B weights are
    # baked in here because pg_search reads a tsvector_column as stored.
    # Note: new and updated rows need a trigger or generated column to stay current.
    execute <<~SQL
      UPDATE documents
      SET searchable =
        setweight(to_tsvector('english', coalesce(title, '')), 'A') ||
        setweight(to_tsvector('english', coalesce(body, '')), 'B')
    SQL

    # GIN index = fast lookup. Without this, every search is a full table scan.
    add_index :documents, :searchable, using: :gin
  end

  def down
    remove_column :documents, :searchable
  end
end

# Model
class Document < ApplicationRecord
  include PgSearch::Model

  pg_search_scope :search_for_rag,
    against: { title: "A", body: "B" }, # Title matches weighted higher
    using: {
      tsearch: {
        dictionary: "english",
        prefix: true, # "refund" matches "refunding"
        tsvector_column: "searchable"
      },
      trigram: { threshold: 0.3 } # Handles typos gracefully
    }
end
```

Pro Tip: The pg_search gem ranks with Postgres's ts_rank, which is not true BM25: it lacks IDF weighting and term-frequency saturation. For RAG-grade ranking, use ParadeDB's pg_search extension (BM25 via Tantivy) or Tiger Data's pg_textsearch.

For non-Rails teams, here's the Python equivalent using the bm25s library, which is much faster than rank_bm25:

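```python
import bm25s  # bm25s: orders of magnitude faster than rank_bm25

# Placeholder corpus; in practice, load your own documents
documents = [
    {"text": "The warranty is void if the seal is broken."},
    {"text": "Shipping takes three to five business days."},
]

# Index your documents once
corpus = [doc["text"] for doc in documents]
retriever = bm25s.BM25(corpus=corpus)
retriever.index(bm25s.tokenize(corpus))

# Query at runtime: no GPU, no API calls, no embeddings
# k cannot exceed the number of indexed documents
results, scores = retriever.retrieve(
    bm25s.tokenize("warranty void clause"), k=min(8, len(corpus))
)
```

Approach 2: PageIndex (Reasoning-Based Tree Retrieval)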

What it is: This is the approach that put "vectorless RAG" on the map in 2025. Developed by VectifyAI, PageIndex reframes retrieval as tree search over a generated table of contents.

For newcomers: Imagine you're a lawyer reviewing a 300-page contract. You don't read every word — you flip to the table of contents, find "Termination Clauses," turn to page 187, and read that section. PageIndex teaches an LLM to do exactly that.

The pipeline has two phases. Phase 1 (Ingestion): an LLM walks the document and summarizes each section into a JSON tree of nodes (id, title, summary, page range). Phase 2 (Query): the LLM reads only the tree summaries (not the full document), picks the most relevant node IDs, and the system fetches raw text for only those nodes.
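The two phases can be sketched without any network calls. In the snippet below the tree is hand-written rather than LLM-generated, the `pages` and `nodes` field names are assumptions (the real PageIndex schema may differ), and `select_node_ids` is a stub standing in for the LLM's choice in phase 2.

```ruby
require "json"

# Phase 1 output: a tree of nodes with id, title, summary and page range.
TREE = JSON.parse(<<~JSON)
  {
    "id": "root", "title": "Master Services Agreement", "summary": "Full contract",
    "pages": [1, 300],
    "nodes": [
      { "id": "n1", "title": "Payment Terms", "summary": "Invoicing, late fees",
        "pages": [40, 52], "nodes": [] },
      { "id": "n2", "title": "Termination Clauses", "summary": "Notice periods, termination for cause",
        "pages": [187, 201], "nodes": [] }
    ]
  }
JSON

# Flatten the tree into the compact outline the LLM reads in phase 2.
def outline(node, depth = 0)
  line = "#{'  ' * depth}#{node['id']}: #{node['title']} (#{node['summary']})"
  [line] + node["nodes"].flat_map { |child| outline(child, depth + 1) }
end

# Stub (assumption): a real system would send the outline and question to an LLM.
def select_node_ids(_outline_text, _question)
  ["n2"]
end

# Resolve the selected node IDs to the page ranges whose raw text gets fetched.
def page_ranges(node, ids)
  own = ids.include?(node["id"]) ? [Range.new(*node["pages"])] : []
  own + node["nodes"].flat_map { |child| page_ranges(child, ids) }
end

puts outline(TREE)
ids = select_node_ids(outline(TREE).join("\n"), "How can either party terminate?")
p page_ranges(TREE, ids)
```

The point of the design is that the model only ever reads the short outline; the full text is loaded for the handful of pages it picks.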

The benchmark that made headlines: VectifyAI's Mafin 2.5, built on PageIndex, scored 98.7% accuracy on FinanceBench — a financial QA benchmark — versus ~31% for standard GPT-4o RAG. That's a meaningful gap.

Honest caveat: This benchmark is vendor-published on a single-document, hierarchically rich dataset. Independent research (arXiv 2511.18177) testing across 1,200 SEC filings found vector RAG with reranking beat tree-based approaches on noisy multi-document corpora. Both findings can be true — PageIndex wins on structured documents, vectors win on noisy large corpora.

Here's how to integrate PageIndex into Rails by calling its API from a background job, then traversing the tree at query time:

```ruby
# Background job: build the tree on document upload
class BuildPageIndexJob < ApplicationJob
  queue_as :default

  def perform(document_id)
    document = Document.find(document_id)

    response = Faraday.post("https://api.vectify.ai/v1/index") do |req|
      req.headers["Authorization"] = "Bearer #{ENV['VECTIFY_API_KEY']}"
      req.headers["Content-Type"] = "application/json"
      req.body = {
        document_url: document.file_url,
        document_id: document.id.to_s
      }.to_json
    end
    raise "PageIndex request failed (#{response.status})" unless response.success?

    tree = JSON.parse(response.body)
    # Store the tree in a jsonb column for fast query-time traversal
    document.update!(page_index_tree: tree, indexed_at: Time.current)
  end
end

# Query-time retrieval
class PageIndexRetriever
  def retrieve(document, question)
    tree_json = document.page_index_tree.to_json

    # Step 1: LLM selects relevant node IDs from the tree summaries
    node_ids = select_nodes_from_tree(tree_json, question)

    # Step 2: Fetch raw text for only those nodes
    fetch_node_content(document, node_ids)
  end

  private

  def select_nodes_from_tree(tree_json, question)
    client = OpenAI::Client.new
    response = client.chat(parameters: {
      model: "gpt-4o-mini",
      messages: [
        { role: "system", content: "Given the document tree, return a JSON array of node IDs most relevant to the question. Return ONLY valid JSON." },
        { role: "user", content: "Tree: #{tree_json}\n\nQuestion: #{question}" }
      ]
    })
    JSON.parse(response.dig("choices", 0, "message", "content"))
  end

  # Assumption (hypothetical schema): page text lives in a document.pages
  # association (page_number, text), and tree nodes carry "id", "start_page",
  # "end_page" and child "nodes" keys. Adjust to the tree your indexer returns.
  def fetch_node_content(document, node_ids)
    flatten_tree(document.page_index_tree)
      .select { |node| node_ids.include?(node["id"]) }
      .map do |node|
        document.pages.where(page_number: node["start_page"]..node["end_page"])
                .order(:page_number).pluck(:text).join("\n")
      end
  end

  # Depth-first list of every node, whether the root is a hash or an array.
  def flatten_tree(node)
    return node.flat_map { |n| flatten_tree(n) } if node.is_a?(Array)

    [node] + flatten_tree(node.fetch("nodes", []))
  end
end
```

Approach 3: Knowledge Graph RAG

What it is: Instead of chunks, your knowledge is stored as a graph of entities and relationships. Retrieval becomes graph traversal — no similarity search required.

For newcomers: Instead of storing "The CEO of Acme Corp is Jane Smith, who previously worked at TechCorp," you store nodes (Jane Smith, Acme Corp, TechCorp) connected by typed edges (IS_CEO_OF, PREVIOUSLY_WORKED_AT). Now the question "Who leads Acme Corp?" is just a graph lookup, not a semantic search.
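That example can be sketched as an in-memory triple store. The `subjects_of` and `objects_of` helpers are illustrative assumptions; a real deployment would run the equivalent lookups in a graph database.

```ruby
# Each fact is a [subject, predicate, object] triple.
TRIPLES = [
  ["Jane Smith", "IS_CEO_OF", "Acme Corp"],
  ["Jane Smith", "PREVIOUSLY_WORKED_AT", "TechCorp"]
].freeze

# Who or what points at `object` through `predicate`?
def subjects_of(predicate, object)
  TRIPLES.select { |_subj, pred, obj| pred == predicate && obj == object }.map(&:first)
end

# What does `subject` point at through `predicate`?
def objects_of(subject, predicate)
  TRIPLES.select { |subj, pred, _obj| subj == subject && pred == predicate }.map(&:last)
end

# One hop: "Who leads Acme Corp?"
ceo = subjects_of("IS_CEO_OF", "Acme Corp")
p ceo

# Two hops: "Where did Acme Corp's CEO work before?"
p ceo.flat_map { |person| objects_of(person, "PREVIOUSLY_WORKED_AT") }
```

The two-hop question is where graphs pay off: each hop is an exact lookup, so the answer does not depend on both facts landing in the same retrieved chunk.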

- 1.Microsoft GraphRAG (arXiv 2404.16130): Extracts entities and relationships with an LLM, runs community detection (Leiden algorithm) to find clusters of related entities, generates community summaries, and answers "global" questions by doing a map-reduce over those summaries. The key insight: vector RAG is great for "find the chunk about X" but terrible for "give me an overview of how all parts of this domain relate" — GraphRAG handles that second query type.

- 2.Schema-driven Knowledge Graphs (Neo4j, Amazon Neptune): When your data is already relational — products, customers, contracts, regulations — you can represent it as a knowledge graph and query with Cypher or SPARQL. The LLM converts the user's question to a graph query, no embeddings needed.

Best for: Enterprise knowledge management, multi-hop reasoning ("Which clients use the feature that depends on the deprecated API?"), regulatory compliance, drug interactions, organisational hierarchies.

Approach 4: Text-to-SQL (Structured Data RAG)

What it is: The LLM generates SQL to query your relational database directly. If your "documents" are structured data — transactions, orders, CRM records — this is often strictly better than any retrieval approach.

For newcomers: Instead of "retrieve chunks about Q1 revenue → feed to LLM → hope it gets the math right," you do: user asks "What was total Q1 churn by plan?" → LLM writes SQL → execute against Postgres → return precise numbers → LLM explains them.

Here's a Rails example with an LLM-generated query:

```ruby
class TextToSqlRag
  SCHEMA_CONTEXT = <<~SQL
    -- Tables available:
    -- subscriptions(id, user_id, plan, status, cancelled_at, created_at)
    -- users(id, email, company_name, created_at)
    -- invoices(id, subscription_id, amount_cents, paid_at, period_start, period_end)
  SQL

  def answer(question)
    # Step 1: LLM writes the SQL
    sql = generate_sql(question).strip.delete_suffix(";")

    # Step 2: Safety check: a single SELECT statement only.
    # A prefix test alone would let "SELECT 1; DROP TABLE users" through.
    unless sql.match?(/\ASELECT\b/i) && !sql.include?(";")
      raise SecurityError, "Only single SELECT queries permitted"
    end

    # Step 3: Execute in a read-only transaction and format results.
    # Defence in depth: also connect with a database role that has no write grants.
    rows = ActiveRecord::Base.transaction do
      ActiveRecord::Base.connection.execute("SET TRANSACTION READ ONLY")
      ActiveRecord::Base.connection.select_all(sql).to_a
    end

    # Step 4: LLM explains the data
    explain_results(question, rows)
  end

  private

  def generate_sql(question)
    client = OpenAI::Client.new
    response = client.chat(parameters: {
      model: "gpt-4o",
      messages: [
        { role: "system", content: "Generate PostgreSQL SELECT queries only. Schema:\n#{SCHEMA_CONTEXT}\nReturn ONLY the SQL. No explanation, no markdown." },
        { role: "user", content: question }
      ]
    })
    response.dig("choices", 0, "message", "content").strip
  end

  # Ask the LLM to describe the rows; the numbers themselves come from Postgres.
  def explain_results(question, rows)
    response = OpenAI::Client.new.chat(parameters: {
      model: "gpt-4o",
      messages: [
        { role: "system", content: "Explain the query results in plain language. Use only the numbers provided." },
        { role: "user", content: "Question: #{question}\n\nRows: #{rows.to_json}" }
      ]
    })
    response.dig("choices", 0, "message", "content")
  end
end
```

Best for: Quantitative questions over structured data, business intelligence queries, any use case where exact numbers matter (financial reporting, usage analytics, sales dashboards).

Approach 5: Agentic Keyword Search

What it is: Expose keyword/BM25 search as a tool for the LLM. The model issues searches, reads results, refines its query, and iterates — just like a human researcher would.

This is the approach validated by AWS researchers in their 2026 paper "Keyword search is all you need" (arXiv 2602.23368). The finding: an agent with keyword search tools achieves >90% of the answer quality of vector RAG — without a vector database.

The key insight is that the LLM compensates for lexical search's limitations (synonym gap, query dependency) through multi-step reasoning. It doesn't get the answer wrong because its search missed a synonym — it retries with a different keyword.

```ruby
# Define the search tool for your LLM
SEARCH_TOOL = {
  type: "function",
  function: {
    name: "search_documents",
    description: "Search the knowledge base using keyword search. Use specific technical terms. You can call this multiple times with different keywords to find all relevant information.",
    parameters: {
      type: "object",
      properties: {
        query: { type: "string", description: "Keyword search query - be specific" },
        limit: { type: "integer", description: "Number of results (1-10)", default: 5 }
      },
      required: ["query"]
    }
  }
}.freeze

class AgenticKeywordRag
  MAX_ITERATIONS = 5 # Prevent infinite loops

  def answer(question)
    messages = [
      { role: "system", content: "You are a helpful assistant. Use the search_documents tool to find relevant information before answering. Search multiple times with different keywords if needed." },
      { role: "user", content: question }
    ]

    MAX_ITERATIONS.times do
      response = call_llm(messages, tools: [SEARCH_TOOL])
      message = response.dig("choices", 0, "message")
      tool_calls = message["tool_calls"]

      # No tool calls means the LLM has finished: return the final answer
      return message["content"] if tool_calls.blank?

      # Every tool call needs a matching tool message, so handle all of them
      messages << message
      tool_calls.each do |tool_call|
        args = JSON.parse(tool_call.dig("function", "arguments"))
        limit = (args["limit"] || 5).to_i.clamp(1, 10)

        # Execute the keyword search (ts_rank via pg_search unless you swap in BM25)
        results = Document.search_for_rag(args["query"]).limit(limit)
        context = results.map { |d| "**#{d.title}**\n#{d.body}" }.join("\n\n---\n\n")

        # Feed results back to the LLM and continue
        messages << {
          role: "tool",
          tool_call_id: tool_call["id"],
          content: context.presence || "No results found."
        }
      end
    end

    # Loop budget exhausted without a final answer
    "No confident answer found within #{MAX_ITERATIONS} search rounds."
  end

  private

  def call_llm(messages, tools: [])
    OpenAI::Client.new.chat(parameters: {
      model: "gpt-4o-mini",
      messages: messages,
      tools: tools
    })
  end
end
```

Vector vs. Vectorless: The Honest Comparison

There is no universal winner. Here's the framework I use when architecting a RAG system — for each signal, lean one way or the other:

- 1.Document structure: Hierarchical (contracts, reports, manuals) → vectorless. Flat, paragraph-based → vector.

- 2.Query type: Exact terms, identifiers, codes → vectorless. Conceptual, synonym-heavy → vector.

- 3.Corpus size: Single or few long documents → vectorless. Thousands of short documents → vector.

- 4.Domain: Niche / specialised → vectorless. General / open-domain → vector.

- 5.Update frequency: Frequent → vectorless. Infrequent → vector.

- 6.Existing infra: Already on Postgres/ES → vectorless. Greenfield, vector DB acceptable → vector.

- 7.Auditability needed: Yes → vectorless. Not required → vector.
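If it helps to make the framework mechanical, the signals can be tallied as votes. This is only a sketch: the signal names are my shorthand for the seven items above, and the "margin under 3 means hybrid" rule is an arbitrary assumption, not a researched threshold.

```ruby
# Each symbol names the vectorless-leaning side of one signal.
VECTORLESS_SIGNALS = %i[
  hierarchical_documents exact_term_queries few_long_documents
  niche_domain frequent_updates existing_postgres_or_es auditability_required
].freeze

# answers: hash of signal => true/false. Missing signals count as vector-leaning.
def lean(answers)
  votes = VECTORLESS_SIGNALS.count { |signal| answers.fetch(signal, false) }
  against = VECTORLESS_SIGNALS.size - votes

  # Assumption: a margin of fewer than 3 votes is too close to call, so go hybrid.
  return :hybrid if (votes - against).abs < 3

  votes > against ? :vectorless : :vector
end

p lean({ hierarchical_documents: true, exact_term_queries: true,
         niche_domain: true, auditability_required: true, frequent_updates: true })
```

Treat the output as a starting hypothesis to test on your own data, not a verdict.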

The production default in most enterprises in 2026: hybrid. Run BM25 and dense retrieval in parallel, then combine their rankings using Reciprocal Rank Fusion (RRF). Postgres can do this in a single query by pairing a BM25 extension with pgvector (dense). The query below uses ParadeDB's pg_search syntax for the BM25 half; Tiger Data's pg_textsearch is an alternative with its own syntax:

```sql
-- Hybrid RAG retrieval: BM25 + dense, fused via RRF
WITH bm25_results AS (
  SELECT id,
         row_number() OVER (ORDER BY paradedb.score(id) DESC) AS rank
  FROM documents
  WHERE body @@@ 'warranty void clause' -- BM25 search (ParadeDB syntax)
  ORDER BY paradedb.score(id) DESC      -- without ORDER BY, LIMIT keeps arbitrary rows
  LIMIT 50
),
vector_results AS (
  SELECT id,
         row_number() OVER (ORDER BY embedding <=> $1) AS rank -- $1 = query embedding
  FROM documents
  ORDER BY embedding <=> $1
  LIMIT 50
)
SELECT
  COALESCE(b.id, v.id) AS id,
  COALESCE(1.0 / (60 + b.rank), 0.0) +
  COALESCE(1.0 / (60 + v.rank), 0.0) AS rrf_score -- a missing rank contributes 0
FROM bm25_results b
FULL OUTER JOIN vector_results v ON b.id = v.id
ORDER BY rrf_score DESC
LIMIT 10;
```

For newcomers: RRF (Reciprocal Rank Fusion) scores each document with a simple formula, 1 / (60 + rank), summed over every result list the document appears in. If a document ranks 1st in BM25, it gets 1/(60+1) ≈ 0.0164. If it also ranks 3rd in vector search, it gets an additional 1/(60+3) ≈ 0.0159, for a total of about 0.0323. Documents that rank well in both systems rise to the top. The constant 60 dampens the importance of rank differences at the top.
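The same fusion is easy to run in application code when the two rankings come from different systems. Here is a minimal sketch; the `rrf` helper and the document IDs are illustrative assumptions.

```ruby
RRF_K = 60

# rankings: array of ranked ID lists, best first.
# Returns [id, score] pairs sorted by fused score, best first.
def rrf(rankings, k: RRF_K)
  scores = Hash.new(0.0)
  rankings.each do |ranked_ids|
    ranked_ids.each_with_index do |id, index|
      scores[id] += 1.0 / (k + index + 1) # index is 0-based, ranks are 1-based
    end
  end
  scores.sort_by { |_id, score| -score }
end

bm25_ranking = %w[doc_a doc_b doc_c]
vector_ranking = %w[doc_d doc_c doc_a]

rrf([bm25_ranking, vector_ranking]).each do |id, score|
  puts format("%-6s %.4f", id, score)
end
```

Here doc_a is 1st in BM25 and 3rd in vector search, so it scores 1/61 + 1/63 and comes out on top, just ahead of doc_c (3rd and 2nd). Documents that appear in only one list trail behind.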

What the Research Says in 2026

The research picture has become more nuanced in the past year.

Supporting vectorless / lexical approaches:

- 1.ELITE (arXiv 2505.11908, 2025): Iterative LLM-based retrieval outperformed embedding baselines on long-context QA at >10× lower storage overhead.

- 2."Keyword search is all you need" (AWS, arXiv 2602.23368, 2026): Agentic keyword search achieves >90% of vector RAG quality.

- 3.Prompt-RAG (arXiv 2401.11246, 2024): In niche domains, LLM-based section selection outperformed embedding retrieval.

- 4."The Semantic Illusion" (arXiv 2512.15068, 2025): Embedding-based hallucination detectors had 88–100% false-positive rates on real RAG outputs; LLM reasoning-based judges dropped that to 7%.

Supporting vector / hybrid approaches:

- 1."Rethinking Retrieval" in Finance (arXiv 2511.18177, 2025): Testing on 1,200 SEC filings, vector RAG with cross-encoder reranking achieved a 68% win rate over hierarchical tree-based approaches. On noisy, multi-document corpora — vectors still win.

The reconciliation: Both camps are right about different workloads. Single, structured, hierarchy-rich documents → vectorless. Noisy, large, multi-document corpora → vector + reranker. The industry is converging on hybrid as the safe default.

Common Misconceptions (Don't Get Caught Here)

- 1."Vectorless means cheaper per query": Not necessarily. PageIndex-style tree traversal involves multiple sequential LLM calls per retrieval — which can cost more per query than a single embedding API call. The savings are in infrastructure (no vector DB), not always in per-query token cost.

- 2."BM25 is obsolete": BM25 is the default ranking algorithm in Elasticsearch and OpenSearch in 2026. It is deployed at larger scale than any neural retrieval system on the planet. It is not obsolete.

- 3."tsvector in Postgres is BM25": It isn't. Postgres's built-in `ts_rank` lacks proper IDF weighting and term-frequency saturation. For true BM25 in Postgres, you need ParadeDB's `pg_search`, Tiger Data's `pg_textsearch`, or VectorChord-BM25. The difference matters when document lengths vary significantly.

- 4."98.7% accuracy means vectorless is always better": That number comes from a vendor's evaluation of their own product on a benchmark designed around a strength (single structured financial documents). On other benchmarks and corpus types, the results flip. Always evaluate on your data.

- 5."I need Elasticsearch for BM25": If you're already on Postgres and your corpus is under ~500k documents, Postgres with a GIN-indexed tsvector or ParadeDB is competitive with Elasticsearch — without the operational overhead of a separate cluster.

Practical Decision Guide: What to Build

Here's what I'd actually recommend based on your situation:

- 1.Starting a new AI feature on an existing Rails app: Start with `pg_search` (BM25 via Postgres) + agentic keyword tool. Get to 80% quality in days, not weeks. Evaluate before reaching for a vector DB.

- 2.Building on structured enterprise data (contracts, financial reports, compliance docs): Try PageIndex-style tree retrieval. The accuracy gains on hierarchy-rich documents are real and significant.

- 3.Working with a large corpus (100k+ docs) with conceptual/synonym-heavy queries: Vector RAG or hybrid (BM25 + pgvector + RRF) is likely your best bet.

- 4.Need precise answers from relational/tabular data: Text-to-SQL. Don't chunk your database — query it.

- 5.Need to handle "find this specific clause" AND "summarise how all our contracts handle liability": GraphRAG. It's the only approach that handles global sensemaking well.

- 6.Unsure?: Build hybrid from day one. BM25 is almost free to add if you're on Postgres. Add `pgvector` alongside it. RRF is 10 lines of SQL.

Conclusion

The "you need a vector database for RAG" assumption is worth challenging — not because vectors are bad, but because the right tool depends entirely on your corpus, your queries, and your constraints.

Vectorless RAG is not a new technology. BM25 has been powering enterprise search for 30 years. What's new is the recognition that LLM-based reasoning can close the gap that lexical search leaves open — through iterative search, tree traversal, and multi-step reasoning — without dense embedding infrastructure.

For most Rails teams building their first RAG feature, the stack I'd reach for in 2026 is:

- 1.PostgreSQL: with `pg_search` or ParadeDB for BM25.

- 2.Agentic keyword search: as the retrieval pattern.

- 3.pgvector: added when benchmarks show a semantic gap.

- 4.RRF hybrid: as the production-hardened default.

The vector database stays optional until the data proves otherwise.